AI Means Always Looking to the Future

Where's the line between forward-looking optimism and a rolling tomorrow that never arrives?

Hello! Thanks for reading The Smarter Image. I appreciate your attention. If you want to help support this newsletter, please recommend it to a friend. Thank you!

[This newsletter is 100% written by me, not AI.]

You know me, I get excited about all things related to photography and AI. Smart masking in Lightroom has fundamentally changed how I edit photos. Generative Fill can fix photos that a traditional Remove tool chokes on. Machine learning can help tag photos automatically and detect the contents of images, making them easier to locate later.

But sometimes it’s frustrating as hell, as I found out recently. And it reminded me that the nature of AI technology means we’re often left wanting, waiting for a future incarnation that will do what we want: “This will work better next time/next month/next year.” When next time arrives, it’s not quite what we expected, and so we reset our expectations and return to “Well, it will certainly be improved next time/next month/next year!”

Let me explain with an example.

The iPad Lock Screen

Normally I choose one of my photos to use as the lock screen on my iPad and leave it be, usually until the next season rolls around. At some point in the last few months I needed to test something and turned on the option to change the photo every hour, using only nature images from my library.[1]

That sounds a little chaotic, I’ll admit, but I liked it. The feature allowed me to see photos I hadn’t looked at in a while, and because it’s using machine learning to determine what’s in the image, it automatically masks the current time behind objects such as mountains or trees. It’s a neat effect. (Machine learning is also at work determining which photos are “nature”—don’t tell me Apple has been lagging in AI over the years.)

One of the photos that showed up recently was the black and white photo at the top of this post, which I captured with an iPhone XS on vacation in Hawaii. It depicts a beach with trees and dozens of birds in silhouette scattering toward the sky. Seeing the photo took me back to that moment when my wife and I were having breakfast outdoors at a little seaside cafe.

The Search of Frustration

I wanted to view the image in my Photos library so I could share it on social media.[2] Because it was shot with an iPhone, it was unlikely that I’d added keywords to it (you can do it in Photos, but it’s a pain). And I couldn’t immediately recall when the photo was taken.

No problem, though! The Photos app builds its own categories and associations based on what it recognizes in images. In the search field on my Mac, I typed “birds”. That brought up 429 photos…but none of them were this one.

I tried other descriptors. “Beach.” “Black and white.” “Bird.” “Ocean.”

None of them surfaced this photo.

More complicated queries like “black and white photo of birds on a beach” also failed.

Typing “Hawaii” also didn’t work, which surprised me because the iPhone XS would have automatically tagged the GPS coordinates.

The most frustrating part (and believe me, my frustration was steadily rising because I know this should work) was that I couldn’t really do anything to improve the results or work my way toward a solution. The semantic data that Photos pulls together is a black box that you can’t access. I couldn’t ask the app to re-scan the library.

How did I eventually find the photo? The old-fashioned way: I scrolled until I saw photos from the same trip and then scanned through them to find this one.

And then I manually added keywords to it.

The Optimism for the Future

AI technologies are a swiftly moving target. Look at how far generative image creation has come in just the last two years, or how LLMs (large language models) have steadily improved just in the past year.

It’s reasonable to look at this search problem and think, “surely the next iteration will do better.” That’s the whole point of machine learning. The more images the models ingest and the better they’re tuned to what those images contain, the better they will get at identifying things. I didn’t think “birds” or “beach” would be difficult concepts at this point, but apparently something about this photo didn’t tick any of the right boxes. We’re left hoping things will improve, without any indication of just how or when that will happen.

Now, this happened in the Apple Photos app, so perhaps the fault lies with Photos. However, I did the same searches in Lightroom desktop (which uses machine learning in Creative Cloud to scan and invisibly categorize images) and also did not come up with the correct image. It did, however, show one from the same morning, so I was able to view photos from the same date and find the right shot.

Natural Language Queries

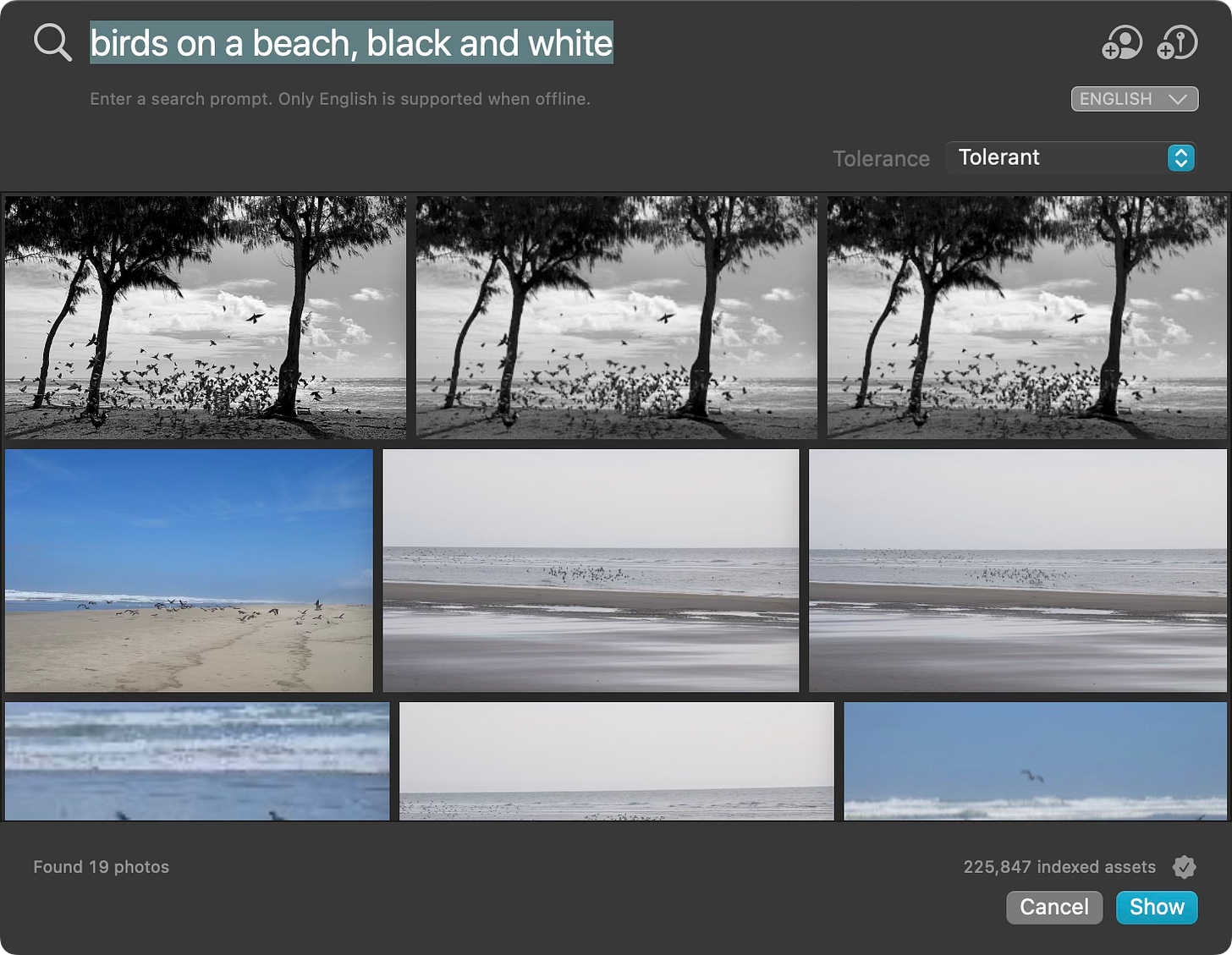

You know what worked almost immediately? Peakto. It uses its own AI implementation to analyze photos, but more importantly it understands natural language requests. With Photos I had to make multiple stabs using keywords, and when I tried to combine them I got zero results. In Peakto, I typed “birds on a beach, black and white” into its Conversational Search field and the image came up right away. (It also found the image in other libraries, since I copied it to my Lightroom library at some point. Peakto is great for hopping between libraries.)

Back to the Future

So I guess AI did finally deliver what I wanted; I was just looking in the wrong places. As William Gibson famously said, “The future is already here—it’s just not evenly distributed.” If I didn’t already know about and use Peakto, I likely would have stewed in my frustration and yearned for a yurt in the woods.

I said earlier that AI is a moving target, but that’s not quite right. AI is a moving target you can’t even see until it peeks out of an opaque box. When applied to photography, it can be frustrating but it can also be inspiring. And we get to experience it, whether we want to or not.

Let’s see what tomorrow brings.

Thanks again for reading and recommending The Smarter Image to others who would be interested. Send any questions, tips, or suggestions for what you’d like to see covered at jeff@jeffcarlson.com.

[1] Find that on iPad or iPhone at Settings > Wallpaper. Add a new wallpaper and specify a Photo Shuffle style.

[2] Only much later did I realize there’s a shortcut for this: Touch and hold the Lock Screen, tap Customize, tap Lock Screen, tap the More (…) button, and then tap Show Photo in Library. But for the sake of the overall point I’m making, we’re going to ignore all that for now.